New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

context: recursive implementation of Context.Value triggers stack resize #47292

Comments

|

Change https://golang.org/cl/335790 mentions this issue: |

|

Change https://golang.org/cl/335789 mentions this issue: |

|

It seems to me that a context chain 12 long is the anomaly here. Why is it so long? That, to me, is the real problem here. We shouldn't rewrite every recursive call because in some cases the stack can grow. That removes recursion as a tool, and recursion is too elegant a tool to be castigated. I am not in favor of going down this road. |

|

I'm not sure how to measure the lengths of context chains in my own projects, but don't find 12 to be an anomaly at all; contexts are frequently used for tracing/metrics/logging, where the length of the chain corresponds to the number of interesting calls and logged values. Throw in some WithTimeout/WithDeadline/WithCancel, and it's definitely above 12 on average for my service. |

|

Good to know. Thanks. I still feel that rewriting library code to avoid recursion because it's sometimes expensive is not wise practice. Certainly not a precedent to establish. By the way, the screenshot link above is Google-internal, I think. |

|

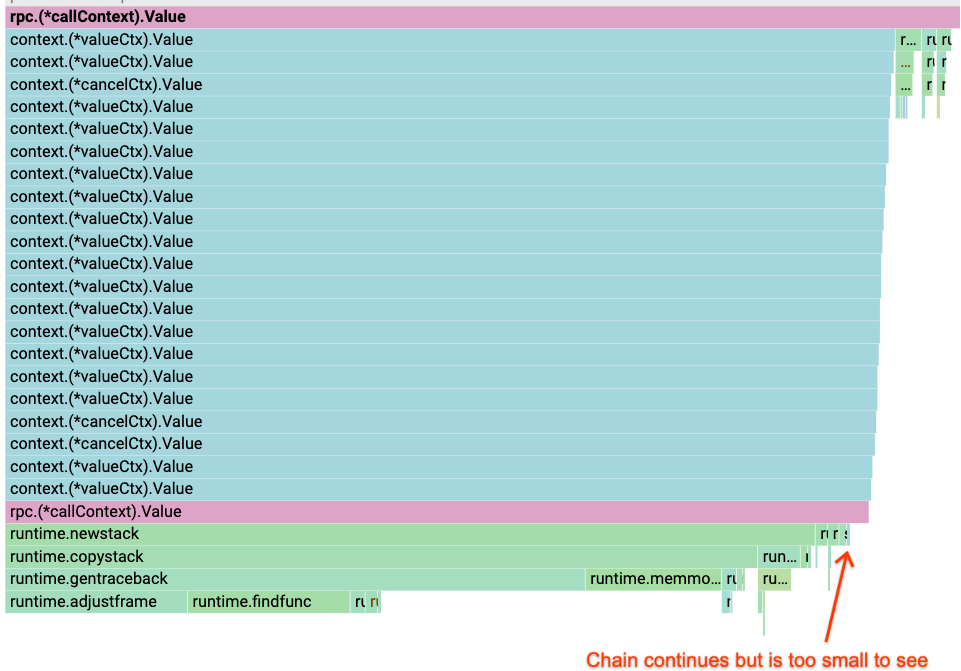

The In the case of the server I am talking about, I don't think the application logic creates any of the value contexts -- they are all created at the framework level. A cropped view of the flame graph: |

|

I have been pointed to other services with chains of over 50. |

|

I managed to print a context for a server which handles HTTP traffic where the length of the chain was 19:

So the standard library "owns" 8 out of 19 links in the chain. |

|

There doesn't seem to be a natural limit to the size of a context chain, and it's clear that long chains occur in practice. While I agree that we shouldn't avoid recursion as a general principle, it doesn't seem like a recursive traversal of an arbitrarily-long linked list is the obviously correct approach. |

|

It's correct, just not the fastest. I am persuaded that a careful iterative approach is warranted here, provided it does not encourage a broad rewrite of recursive code in the libraries. Still, it bothers me that a depth of 12 or 19 or even 50 is considered problematic for recursion, especially in Go, which handles deep recursion better than many languages. |

That is because it resizes the stack rather than hitting a stack overflow. For long lived goroutines the resize cost is amortized so does not matter. But for short lived goroutines that cost can be significant. Off topic: What I would really like is to have the option of starting sensitive goroutines with an initial stacksize chosen based on past history. Doing that might look like One could build such a runner if there was a |

|

See: #18138 |

|

The CL https://go-review.googlesource.com/c/go/+/335790 has two thumbs-up reviews and one thumbs-up for trust. Is there anything that is holding it back from merging? |

As discussed in #33283, Go's implementation or valueCtx.Value is recursive which means that the cost grows linearly with the depth of the context chain.

But one issue not discussed there is that when the chain is long enough it will trigger an expensive stack resize for short lived goroutines. This is something that we see in production: according to pprof we have a server that spends 2.3% of CPU time in runtime.newstack for stacks with a chain of 12 or more Context.Value calls. (https://screenshot.googleplex.com/AE9xKYYfQ6ijJeK.)

With an iterative implementation, long context chains should be less problematic.

https://go-review.googlesource.com/c/go/+/210697 has a proposed iterative implementation originally created in 2018. I ended up writing another one before I discovered that:

The text was updated successfully, but these errors were encountered: